As well as shipping Docker logs, I write the logs from my ASP.NET Core applications to disk (the best way to make sure you never lose log information) and then use Filebeat to ship these log files to ElasticSearch. You can then view these logs in a fully customizable Kibana dashboard. Filebeat uses the Lumberjack protocol to send messages to a listener in ADI, and a sample definition of the exchange can be based on the JSON data sent by Filebeat.

My Dockerfile is used to add the Filebeat configuration files to the base Filebeat image and nothing more. Filebeat ships with a sample Kibana dashboard that looks like this:

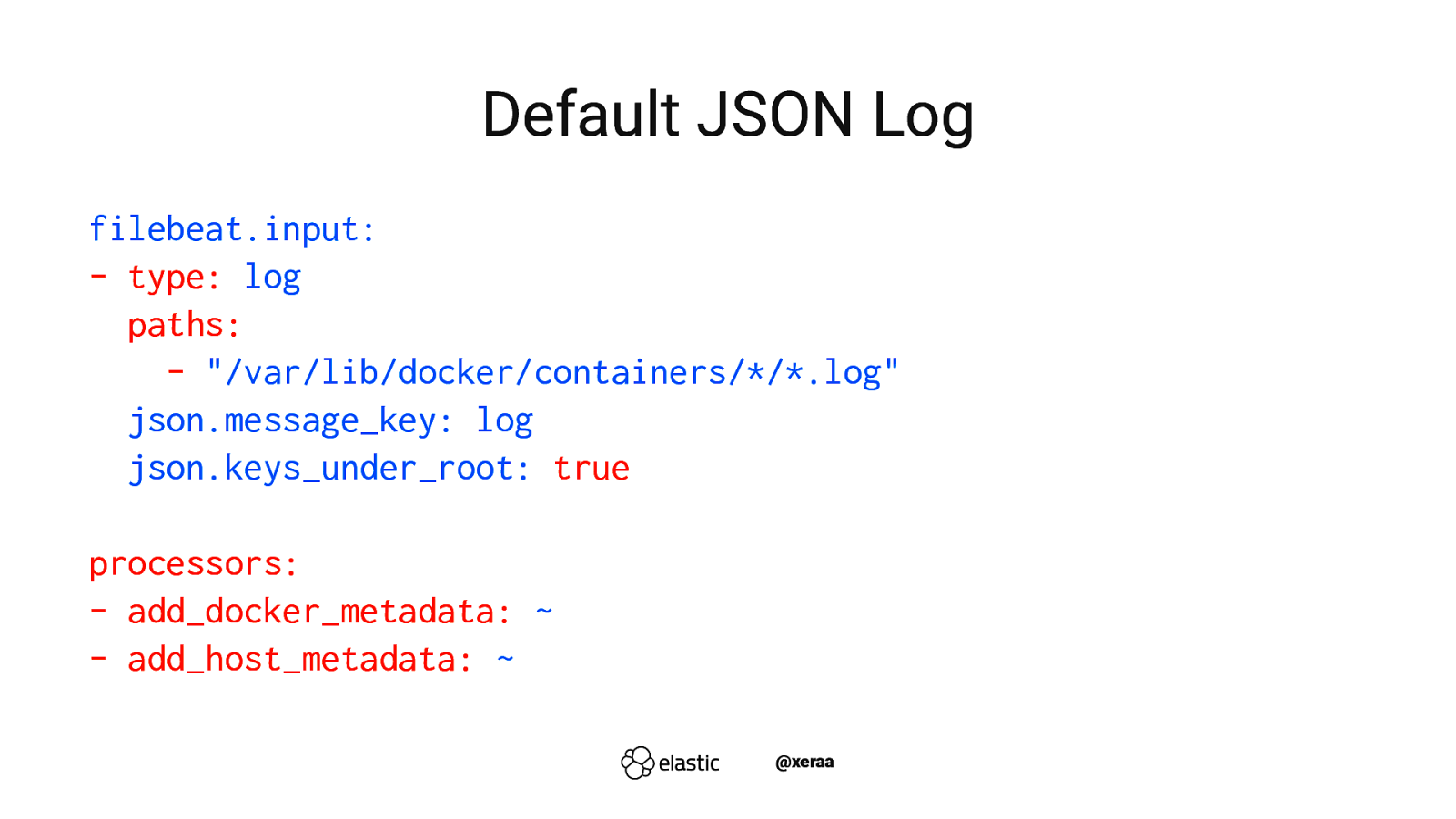
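My applications are ASP.NET Core, but the underlying idea of writing logs to disk for Filebeat to pick up is language-neutral. As an illustration only, here is a minimal Python sketch of one common approach: append each log event to a file as a single line of JSON, a format Filebeat can be configured to tail and decode. The `write_event` helper, the file name and the field names are hypothetical, not taken from my setup.

```python
import json
import os
from datetime import datetime, timezone


def write_event(path, level, message, **fields):
    """Append one log event to `path` as a single line of JSON (hypothetical helper).

    json.dumps escapes newlines inside strings, so every event stays on
    exactly one line and a tailing shipper can read events line by line.
    """
    event = {
        # ISO 8601 timestamp in UTC
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "level": level,
        "message": message,
    }
    event.update(fields)  # extra structured properties, e.g. status=200
    # Append mode never truncates existing log data; flush + fsync push
    # the line to disk before returning, so it is not lost on a crash.
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(event) + "\n")
        f.flush()
        os.fsync(f.fileno())
    return event
```

For example, `write_event("app.log.json", "Information", "Request finished", status=200)` appends one line. Syncing on every write trades throughput for durability; a real application would usually rely on its logging framework's file sink instead.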

In terms of Beats, I use three of them, which I'll talk about below.

Filebeat

Filebeat is a tool used to ship Docker log files to ElasticSearch. The latest version, 6.0, queries the Docker APIs and enriches these logs with the container name, image, labels and so on. This is a great feature, because you can then filter and search your logs by these properties.
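Docker's default `json-file` logging driver writes each line of container output as a JSON object with `log`, `stream` and `time` keys, and Filebeat adds container metadata on top of that. The minimal Python sketch below imitates the pipeline to show why enrichment makes filtering easy. It rests on assumptions: the metadata dict is a hard-coded, hypothetical stand-in for a Docker API lookup, and the nested `docker.container.*` layout is modelled on what I believe Filebeat 6.x produces, so check it against the Filebeat documentation for your version.

```python
import json

# Hypothetical stand-in for a Docker API lookup, keyed by container ID.
# Real container IDs are 64 hex characters.
CONTAINER_METADATA = {
    "abc123": {"name": "web", "image": "myapp:1.0", "labels": {"tier": "frontend"}},
    "def456": {"name": "worker", "image": "myworker:1.0", "labels": {"tier": "backend"}},
}


def parse_docker_line(raw):
    """Decode one line written by Docker's json-file logging driver."""
    entry = json.loads(raw)
    return {
        "message": entry["log"].rstrip("\n"),  # Docker keeps the trailing newline
        "stream": entry["stream"],             # "stdout" or "stderr"
        "@timestamp": entry["time"],
    }


def enrich(event, container_id, metadata=CONTAINER_METADATA):
    """Attach container metadata, loosely modelled on Filebeat's docker.* fields (assumed layout)."""
    meta = metadata.get(container_id)
    if meta is not None:
        event["docker"] = {"container": {"id": container_id, **meta}}
    return event


def by_container(events, name):
    """Keep only events whose enriched container name equals `name`."""
    return [e for e in events
            if e.get("docker", {}).get("container", {}).get("name") == name]


if __name__ == "__main__":
    raw_lines = [
        ("abc123", '{"log":"GET /health 200\\n","stream":"stdout","time":"2017-11-14T10:00:00.000000000Z"}'),
        ("def456", '{"log":"job failed\\n","stream":"stderr","time":"2017-11-14T10:00:01.000000000Z"}'),
    ]
    events = [enrich(parse_docker_line(raw), cid) for cid, raw in raw_lines]
    for e in by_container(events, "web"):
        print(e["@timestamp"], e["message"])
```

Events from containers with no known metadata are passed through unchanged rather than dropped, so a failed lookup never loses log data.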

Knowing what is happening in Docker and in your applications running on Docker is critical. Docker logs wrapped in JSON, or fluentd logs wrapped in EVEN MORE JSON, are a … Fluentd is an open source data collector providing a unified logging layer.

To collect logs from my Swarm and monitor its health, I use the ELK-B stack, which is made up of four pieces of software: ElasticSearch, LogStash (I recommend that you use Beats instead of LogStash), Kibana and various Beats.

ElasticSearch is basically a NoSQL database that is geared towards storing JSON documents and searching across them. Kibana is a visualization tool that gives you a nice UI to view all of your data and produce visualizations and dashboards. There are several Beats, which are used to ship data into ElasticSearch from various sources.

While you could use Docker to host ElasticSearch and Kibana, I use Elastic Cloud at work; you could also use instances hosted by AWS and Azure. Using a hosted version takes some of the pain out of maintaining ElasticSearch. I had a look at the ElasticSearch Docker container, and if you really want to go down the Docker route and create an ElasticSearch cluster, it looks fairly straightforward but a bit unorthodox. There is a cost versus effort trade-off in this decision and it's up to you which way you go.

It was noticed that a different key was being passed to the DataProcessor (DP) as input, which led to a new JSON entry for each relational record. A single key was used for the three input records (PK and FK) in the JSON: the primary key and foreign key of each input group should be connected from the same port in order to keep the hierarchy on the same root.

Useful Docker Images - Administering Docker.
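Since ElasticSearch stores JSON documents, searches are also expressed as JSON using its query DSL. The minimal Python sketch below builds such a request body for log events and sends nothing over the network. The `build_log_search` name, the `filebeat-*` index pattern and the field names `message`, `docker.container.name` and `@timestamp` are assumptions for illustration; a `term` filter also assumes the container-name field is mapped as `keyword`.

```python
import json


def build_log_search(text, container_name=None, size=50):
    """Build an ElasticSearch query DSL body (a plain dict) for log events.

    Hypothetical helper; field names are assumed, not read from a real mapping.
    """
    bool_query = {
        # Full-text match on the log line; this clause contributes to scoring.
        "must": [{"match": {"message": text}}],
    }
    if container_name is not None:
        # Exact-value filter: does not affect scoring and can be cached.
        bool_query["filter"] = [{"term": {"docker.container.name": container_name}}]
    return {
        "size": size,
        "query": {"bool": bool_query},
        # Newest events first.
        "sort": [{"@timestamp": {"order": "desc"}}],
    }


if __name__ == "__main__":
    body = build_log_search("exception", container_name="web")
    # This JSON would be sent as the body of a request such as
    # GET /filebeat-*/_search
    print(json.dumps(body, indent=2))
```

Putting the container name in `filter` rather than `must` keeps it out of relevance scoring, which suits exact metadata such as names, images and labels.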
